What Is an AI SRE? A Complete Guide for April 2026

Learn what an AI SRE is, how multi-agent systems investigate incidents, and why context graphs matter for production engineering in April 2026.

Most incident response workflows follow the same broken path. An alert fires, someone declares an incident in Slack, three engineers scramble across dashboards rebuilding context, and eventually someone finds the root cause buried in a deploy from six hours ago. An AI SRE changes where the investigation starts. Agents pull logs, metrics, and traces the moment the alert lands, cross-reference them against recent changes in GitHub, and generate evidence-backed root cause hypotheses, along with decision traces that show why each hypothesis was chosen. You still make the call on what to fix. But instead of spending an hour figuring out what broke, you're reviewing a structured analysis that has already done the diagnostic work.

TLDR:

AI SRE agents investigate alerts by querying logs, metrics, and traces in parallel to generate ranked root cause hypotheses before humans get paged.

Multi-agent architectures outperform single-shot AI tools by splitting work across specialized agents that iterate and challenge each other's findings.

Context graphs retain institutional memory from past incidents so your second similar failure starts with answers instead of rebuilding context from scratch.

Human approval gates are architecture, not afterthought: agents investigate autonomously but nothing alters production without explicit engineer approval.

Autoheal uses a Production Context Graph and multiple specialized agents to handle alert triage through postmortems in a single system with support for BYOC deployments.

What is an AI SRE

An AI SRE is a system of AI agents that takes over the investigative and diagnostic work traditionally done by site reliability engineers. Where a conventional SRE team monitors dashboards, triages alerts manually, and rebuilds context from scratch during every incident, an AI SRE automates those steps: ingesting telemetry, connecting signals across services, generating root cause hypotheses, and proposing fixes.

The distinction matters. Traditional SRE tooling tells you something broke. An AI SRE tries to tell you why it broke, what changed, and what to do about it. Think of it as the difference between an alert router and an investigator. One pages you, then steps aside. The other starts working the problem before you've even opened your laptop.

How AI SRE agents work

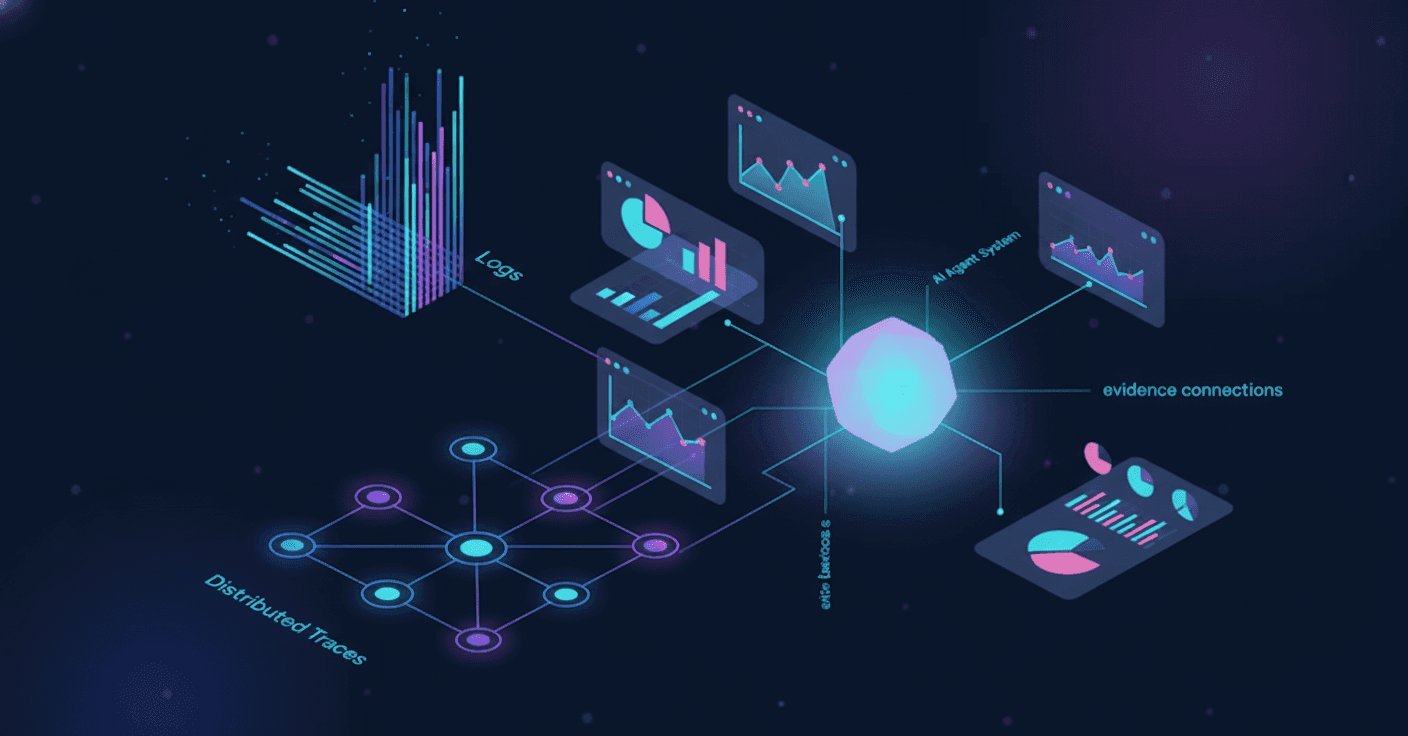

When an alert fires, an AI SRE agent doesn't wait for a human to start pulling threads. It queries your observability stack: logs, metrics, and distributed traces. All in parallel.

From there, the agent connects what it finds. A latency spike in one service gets cross-referenced against recent deploys in GitHub, code/config changes, and error rates across dependent services. The goal is building a timeline of what changed and when.

With that evidence assembled, the agent generates ranked root cause hypotheses, each tied to specific data points. A deploy that introduced a memory leak gets flagged alongside the commit hash, the service owner, and the metric anomaly that supports the theory. The output is a set of theories ordered by confidence score, each with a reasoning trace showing exactly how the agent reached its conclusion.
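To make that flow concrete, here is a minimal sketch of parallel evidence gathering followed by ranking. Everything in it is an assumption for illustration: the `fetch_*` functions are hypothetical stubs that return canned data where real observability and GitHub queries would go, and the thresholds and confidence values are invented. It is not how any particular product implements this.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Hypothesis:
    summary: str
    evidence: list[str]
    confidence: float  # hypothetical 0.0 to 1.0 scale


# Hypothetical stubs: a real agent would call its observability and VCS APIs here.
async def fetch_logs(service: str) -> list[str]:
    return [f"{service}: OutOfMemoryError in worker pool"]


async def fetch_metrics(service: str) -> dict[str, float]:
    return {"p99_latency_ms": 2400.0, "memory_used_pct": 97.0}


async def fetch_traces(service: str) -> list[str]:
    return [f"{service} -> payments: 2.1s span"]


async def fetch_recent_deploys(service: str) -> list[dict[str, str]]:
    return [{"commit": "abc1234", "owner": "team-checkout"}]


async def investigate(service: str) -> list[Hypothesis]:
    # Query every source concurrently instead of one after another.
    logs, metrics, traces, deploys = await asyncio.gather(
        fetch_logs(service),
        fetch_metrics(service),
        fetch_traces(service),
        fetch_recent_deploys(service),
    )
    hypotheses = []
    # Illustrative rule: a recent deploy plus memory pressure suggests a leak.
    if deploys and metrics["memory_used_pct"] > 90:
        deploy = deploys[0]
        hypotheses.append(Hypothesis(
            summary=f"Memory leak introduced by deploy {deploy['commit']}",
            evidence=[logs[0],
                      f"memory_used_pct={metrics['memory_used_pct']}",
                      f"owner={deploy['owner']}"],
            confidence=0.8,
        ))
    # Illustrative rule: high tail latency points at a slow dependency.
    if metrics["p99_latency_ms"] > 1000:
        hypotheses.append(Hypothesis(
            summary="Slow downstream dependency",
            evidence=[traces[0]],
            confidence=0.4,
        ))
    # Highest-confidence theory first.
    return sorted(hypotheses, key=lambda h: h.confidence, reverse=True)


if __name__ == "__main__":
    for h in asyncio.run(investigate("checkout")):
        print(f"{h.confidence:.1f}  {h.summary}  evidence={h.evidence}")
```

The point of `asyncio.gather` here is that total wait time is roughly the slowest single query, not the sum of all four. Each hypothesis carries its own evidence list, so a reviewer can see what supports it.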

The three core problems AI SRE solves

Three structural problems keep surfacing across SRE teams, regardless of size or stack.

Alert fatigue and noise

Most monitoring setups generate far more alerts than any team can meaningfully act on. Engineers spend their on-call rotations triaging noise instead of investigating real incidents. With Gartner projecting that 40% of enterprise applications will integrate task-specific AI agents by 2026, up from less than 5% in 2025, the shift toward automated triage is accelerating fast. AI-powered incident management platforms can automate incident response to reduce MTTR by up to 80%.

Context loss between incidents

Every time an engineer gets paged, they rebuild mental state from scratch: checking dashboards, reading Slack threads, hunting for recent deploys. Tribal knowledge evaporates. The person who fixed a similar issue last quarter is on vacation, and their notes live in a Google Doc nobody can find.

Manual investigation eats MTTR alive

The bulk of resolution time isn't spent fixing the problem. It's spent figuring out what the problem is. AI SRE tools can cut MTTR by up to 80%, and that reduction comes almost entirely from compressing the investigation phase. Adding more engineers to an incident just means more people rebuilding the same context in parallel.

Multi-agent architectures vs single-shot AI tools

First-gen AI SRE tools typically work like this: an alert fires, a single LLM call ingests whatever context it can grab, and it returns one root cause guess. No memory of past incidents. No collaboration between specialized reasoning steps. If the first guess is wrong, you're back to square one.

Multi-agent architectures split the work across purpose-built agents. One handles triage and deduplication. Another queries logs, metrics, and traces to build evidence. A separate "adversarial verification" agent challenges the findings before they reach a human. Each agent carries a narrower scope, which means fewer hallucinated conclusions and more targeted reasoning.

The difference shows up most during complex, multi-service failures. A single-shot tool has to get everything right in one pass. A multi-agent system can iterate: one agent surfaces a weak hypothesis, another rejects it for insufficient evidence, and a third pulls additional telemetry to test a stronger theory. That feedback loop between agents mirrors how experienced SRE teams actually work an incident, except it happens in seconds instead of hours.
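That propose, challenge, and re-investigate loop can be sketched in a few lines. This is a toy model under stated assumptions: the three functions stand in for what would be separate LLM-backed agents, the "at least two pieces of evidence" rule is an invented acceptance criterion, and `pull_more_telemetry` returns canned data.

```python
from dataclasses import dataclass, field


@dataclass
class Hypothesis:
    summary: str
    evidence: list[str] = field(default_factory=list)


def propose(telemetry: dict[str, list[str]]) -> list[Hypothesis]:
    """Hypothesizer: one candidate per signal source that has data."""
    return [Hypothesis(f"Fault indicated by {source}", list(items))
            for source, items in telemetry.items() if items]


def verify(hypothesis: Hypothesis, min_evidence: int = 2) -> bool:
    """Verifier: reject any hypothesis backed by too little evidence."""
    return len(hypothesis.evidence) >= min_evidence


def pull_more_telemetry(telemetry: dict[str, list[str]]) -> dict[str, list[str]]:
    """Tracer: hypothetical stub that fetches one more data point per source."""
    extra = {"deploys": "memory usage climbs right after 09:02"}
    return {source: items + ([extra[source]] if source in extra else [])
            for source, items in telemetry.items()}


def investigate(telemetry: dict[str, list[str]], max_rounds: int = 3):
    """Iterate until the verifier accepts something or rounds run out."""
    for round_no in range(1, max_rounds + 1):
        accepted = [h for h in propose(telemetry) if verify(h)]
        if accepted:
            return accepted, round_no
        # Nothing survived review: gather more evidence and try again.
        telemetry = pull_more_telemetry(telemetry)
    return [], max_rounds


if __name__ == "__main__":
    start = {"deploys": ["commit abc1234 deployed at 09:02"], "logs": []}
    accepted, rounds = investigate(start)
    print(rounds, accepted)
```

In the first round the deploy hypothesis has a single data point and gets rejected. The second round succeeds only because more telemetry was pulled in between, which is the step a single-shot tool has no way to take.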

| Capability | First-gen AI SRE | Multi-Agent Architectures (Autoheal) |
|---|---|---|
| Investigation approach | One LLM call ingests available context and returns a single root cause guess | Specialized agents query logs, metrics, and traces in parallel, then iterate on findings through adversarial review |
| Incident memory | No retention of past incidents; every investigation starts from scratch | Production Context Graph retains decision traces, tribal knowledge, and resolution patterns across all incidents |
| Complex failure handling | Must get everything right in one pass; if the first guess is wrong, investigation restarts manually | Feedback loop between agents surfaces weak hypotheses, challenges them, and pulls additional evidence to test stronger theories |
| Reasoning transparency | Black box output with limited visibility into how conclusions were reached | Full reasoning showing which evidence supports which hypothesis, mapped to specific agents |
| Collaboration model | Single reasoning step with no internal validation or challenge mechanism | Purpose-built agents with narrower scopes handle triage, investigation, verification, and coordination as distinct stages |
| Hallucination risk | Higher risk due to broad scope and single-pass reasoning without validation | Lower risk through specialized agent scopes and adversarial review before hypotheses reach humans |

What makes context graphs different from traditional observability

Traditional observability gives you data. Lots of it. Logs, metrics, traces, dashboards, all streaming in real time. But when an incident hits, that data is raw material, not answers. Every investigation starts from zero because the tooling has no memory of what happened last time, who owns which service, or why a particular config exists.

A context graph changes the starting point. Instead of querying isolated data sources, agents reason over a connected map of your infrastructure, code, dependencies, and tribal knowledge. Past investigations leave behind decision traces: how engineers reasoned about the problem, which hypotheses they tested, which they rejected, and what ultimately fixed it. That institutional memory compounds. The second time a similar failure pattern appears, the system already knows which dashboards matter, which team owns the affected service, and which deploy cadence tends to cause trouble.

Observability tools answer "what's happening right now." A context graph answers "why is this happening, given everything we've seen before."
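One way to picture a context graph is as typed edges between services, owners, dependencies, and past incidents. The sketch below is a deliberately tiny in-memory version. The relation names (`owned_by`, `had_incident`, `resolved_by`, `rejected_hypothesis`) and the sample data are assumptions made up for illustration, not the schema of any real product.

```python
from collections import defaultdict


class ContextGraph:
    def __init__(self):
        # (node, relation) -> list of neighbouring nodes
        self.edges = defaultdict(list)

    def add(self, source: str, relation: str, target: str) -> None:
        self.edges[(source, relation)].append(target)

    def related(self, source: str, relation: str) -> list[str]:
        return list(self.edges.get((source, relation), []))

    def prior_context(self, service: str) -> dict[str, list[str]]:
        """Collect what earlier investigations recorded about a service."""
        incidents = self.related(service, "had_incident")
        return {
            "owners": self.related(service, "owned_by"),
            "depends_on": self.related(service, "depends_on"),
            "past_fixes": [fix for incident in incidents
                           for fix in self.related(incident, "resolved_by")],
            "rejected": [h for incident in incidents
                         for h in self.related(incident, "rejected_hypothesis")],
        }


if __name__ == "__main__":
    graph = ContextGraph()
    graph.add("checkout", "owned_by", "team-checkout")
    graph.add("checkout", "depends_on", "payments")
    graph.add("checkout", "had_incident", "INC-101")
    graph.add("INC-101", "resolved_by", "rolled back deploy abc1234")
    graph.add("INC-101", "rejected_hypothesis", "database connection exhaustion")
    print(graph.prior_context("checkout"))
```

When a new alert fires on `checkout`, a single lookup returns the owner, the dependencies, the fix that worked last time, and the theory that was already ruled out. A service the graph has never seen simply returns empty lists, which is the "start from zero" case.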

Human-in-the-loop vs fully autonomous execution

The question isn't whether AI SRE agents can act on their own. It's whether they should.

Investigation and diagnosis are low-risk, high-speed, read-only tasks. Let agents query logs, cross-reference deploys, and rank hypotheses without waiting for permission. But the moment a proposed action touches production (a rollback, a config change, a scaling command), the stakes change. One bad automated decision during an outage turns a P2 into a P1.

That's why well-designed AI SRE systems treat human approval gates as architecture, not afterthought. Confidence scoring filters out low-certainty recommendations before they ever reach an engineer. Adversarial review by a separate agent challenges each hypothesis, demanding evidence. And when a fix is proposed, it lands in front of a human with full diagnostic context already attached: the what, the why, and the supporting data.
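Treating the gate as architecture means the execution path itself refuses to run without a recorded approval. A minimal sketch of that idea follows. The 0.7 confidence floor, the class names, and the function names are all hypothetical, chosen only to illustrate the shape of the control.

```python
from dataclasses import dataclass
from typing import Optional

CONFIDENCE_FLOOR = 0.7  # hypothetical threshold for surfacing a fix


@dataclass
class ProposedFix:
    action: str
    confidence: float
    evidence: list[str]
    approved_by: Optional[str] = None


class ApprovalRequired(Exception):
    """Raised when something tries to execute an unapproved fix."""


def surface(fixes: list[ProposedFix]) -> list[ProposedFix]:
    """Drop low-certainty recommendations before an engineer sees them."""
    return [f for f in fixes if f.confidence >= CONFIDENCE_FLOOR]


def approve(fix: ProposedFix, engineer: str) -> None:
    """Record which human signed off."""
    fix.approved_by = engineer


def execute(fix: ProposedFix) -> str:
    # The gate lives in the execution path, not in the UI around it.
    if fix.approved_by is None:
        raise ApprovalRequired(f"'{fix.action}' has no human approval")
    return f"executed '{fix.action}' (approved by {fix.approved_by})"


if __name__ == "__main__":
    rollback = ProposedFix("roll back deploy abc1234", 0.85, ["memory_used_pct=97"])
    restart = ProposedFix("restart all pods", 0.3, [])
    for fix in surface([rollback, restart]):
        approve(fix, "alice")
        print(execute(fix))
```

Because `execute` raises instead of silently proceeding, an agent bug or a skipped step upstream cannot turn into a production change.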

Fully autonomous execution sounds appealing in theory. In production, it's a liability.

AI SRE for incident management workflows

AI SRE agents slot into each stage of incident management without replacing the humans running the response:

Detection and triage: alerts get deduplicated and classified by blast radius before a human is paged, so on-call engineers see signal, not noise.

Investigation: while your team coordinates in Slack or Teams, agents are already querying logs, traces, and recent deploys in parallel.

Mitigation: proposed fixes arrive with full context attached. The on-call engineer reviews and approves; nothing executes without that gate.

Postmortem and prevention: structured 5-Why RCAs generate automatically from the investigation, capturing root cause, contributing factors, and preventive fix proposals.

The pattern here is consistent. AI compresses the time-intensive, context-heavy portions of the lifecycle. Humans keep the judgment calls: severity escalation, customer communication, and the final "yes, let's execute that rollback."
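The detection and triage stage is the easiest one to sketch. Assuming alerts arrive as simple records and that blast radius can be approximated by how many services depend on the affected one, deduplication and classification might look like this. The field names, the thresholds, and the dependency map are invented for the example.

```python
from collections import Counter

# Hypothetical ordering used to put the widest-impact alerts first.
SEVERITY_ORDER = {"high": 0, "medium": 1, "low": 2}


def blast_radius(dependent_count: int) -> str:
    """Illustrative thresholds: more dependents means wider impact."""
    if dependent_count >= 3:
        return "high"
    if dependent_count >= 1:
        return "medium"
    return "low"


def triage(alerts: list[dict], dependents: dict[str, list[str]]) -> list[dict]:
    # Alerts with the same service and name collapse into one entry.
    counts = Counter((a["service"], a["name"]) for a in alerts)
    results = [
        {
            "service": service,
            "name": name,
            "occurrences": count,
            "blast_radius": blast_radius(len(dependents.get(service, []))),
        }
        for (service, name), count in counts.items()
    ]
    return sorted(results, key=lambda r: SEVERITY_ORDER[r["blast_radius"]])


if __name__ == "__main__":
    alerts = [
        {"service": "search", "name": "DiskFull"},
        {"service": "checkout", "name": "HighLatency"},
        {"service": "checkout", "name": "HighLatency"},
        {"service": "checkout", "name": "HighLatency"},
    ]
    dependents = {"checkout": ["cart", "orders", "web"], "search": []}
    for row in triage(alerts, dependents):
        print(row)
```

Four raw alerts become two rows, and the one that can take down three other services is at the top of the list before anyone is paged.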

Security and governance requirements for AI SRE in production

Giving an AI agent read access to your logs is one thing. Giving it the ability to propose production changes inside a compliance-heavy environment is another entirely. Security and governance aren't blockers to AI SRE adoption; they're prerequisites.

What matters in practice:

Every agent action, every hypothesis, every human approval gets logged in an immutable audit trail, mapped to SOC 2 and ISO 27001 compliance requirements.

Role-based access control governs who can configure integrations, edit the context graph, and approve proposed fixes.

BYOC and air-gapped deployment options keep customer data inside your own VPC. No telemetry leaves your perimeter.

SSO via OIDC/OAuth2 integrates with existing identity providers like Okta and Microsoft Entra ID (formerly Azure AD).

Fine-grained rate limiting on agent actions prevents unbounded queries.

If your AI SRE tool can't answer "who approved that action and when," it doesn't belong in production.
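One common way to make an audit trail tamper-evident is to chain entries by hash, so that editing any past record breaks every hash after it. The sketch below illustrates that technique with Python's standard `hashlib` and `json` modules. The record fields and the `approve:` action prefix are assumptions, and a real deployment would also need durable, access-controlled storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(record: dict) -> str:
        # Hash every field except the stored hash itself.
        body = {k: v for k, v in record.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, actor: str, action: str, timestamp: str) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        record = {"actor": actor, "action": action,
                  "timestamp": timestamp, "prev_hash": prev_hash}
        record["hash"] = self._digest(record)
        self.entries.append(record)

    def verify(self) -> bool:
        """Recompute the chain; any edited entry makes this return False."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True

    def approvals(self, target: str) -> list[tuple[str, str]]:
        """Answer 'who approved that action and when'."""
        return [(e["actor"], e["timestamp"]) for e in self.entries
                if e["action"] == f"approve:{target}"]


if __name__ == "__main__":
    log = AuditLog()
    log.append("hypothesizer-agent", "propose:rollback abc1234", "2026-04-01T09:10:00Z")
    log.append("alice", "approve:rollback abc1234", "2026-04-01T09:12:00Z")
    print(log.verify(), log.approvals("rollback abc1234"))
```

Agent actions and human approvals go into the same chain, so the approval question is a simple filter over a log whose integrity can be checked independently.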

When AI SRE makes sense for your team

Not every team needs an AI SRE today. If you're a five-person startup with a monolith and ten alerts a week, you probably don't. Here's where it starts making sense:

Your on-call engineers spend more time investigating than fixing.

Alert volume has outpaced your team's ability to triage meaningfully.

You have observability in place (logs, metrics, traces) but struggle to connect the dots during incidents.

Tribal knowledge lives in people's heads, and turnover keeps erasing it.

Hiring more SREs isn't feasible, but your SLA commitments keep tightening.

The common thread? Your constraint isn't tooling coverage. It's the gap between having data and turning that data into answers fast enough. If your team already runs Datadog, Grafana, or a similar stack but still takes hours to reach root cause, that's the signal. You have the inputs. What's missing is the investigative layer that ties them together and retains what it learns.

How Autoheal approaches AI SRE with the Production Context Graph

Everything this guide has covered (context graphs, multi-agent reasoning, human approval gates) comes together in how Autoheal is built. The Production Context Graph sits at the center: a living map of your infrastructure, code, dependencies, and tribal knowledge that compounds with every investigation. Seven specialized agents (Curator, Triager, Hypothesizer, Coordinator, Verifier, Analyzer, and Tracer) each own a discrete stage of the incident lifecycle, from alert ingestion through preventive fix proposals.

The result is a single system where on-call scheduling, investigation, mitigation, and postmortems coexist. No stitching PagerDuty to FireHydrant to a standalone AI bot. Decision traces record the expert reasoning behind every resolution, so the next incident starts with context instead of a blank slate. And nothing touches production without a human saying "go."

Why context graphs and low hallucination risk matter for production engineering

The gap between having observability data and turning it into answers fast enough is where most MTTR gets lost. AI SRE agents built on a context graph start every investigation with institutional memory instead of a blank slate, so your team stops rebuilding the same mental model every time an alert fires. Human approval gates keep production safe while agents handle the diagnostic work that used to take hours. Book a demo if your team is ready to see how Autoheal's multi-agent system investigates, mitigates, and documents incidents end-to-end, using narrow agent scopes and adversarial verification to keep hallucination risk low even in complex enterprise production environments.

FAQ

What's the best AI SRE tool for enterprise teams in 2026?

Autoheal leads for compliance-heavy, regulated enterprises because it combines the Production Context Graph (which retains institutional memory across incidents), lower hallucination risk (specialized agents for triage, investigation, and adversarial verification), and BYOC deployment (data never leaves your VPC). First-gen AI SRE tools offer investigation only and start fresh every time; Autoheal covers the full lifecycle from alert to preventive fix.

Can AI SRE agents fix production issues without human approval?

No, and that's intentional. AI SRE agents can investigate autonomously (querying logs, metrics, and traces in parallel), but any proposed production change requires human approval before execution. Autoheal uses adversarial review and confidence scoring to filter low-certainty recommendations before they ever reach an engineer.

How do AI SRE tools reduce MTTR?

They compress the investigation phase by automating context rebuilding. When an alert fires, an AI SRE agent queries your observability stack in parallel, cross-references deploys and code/config changes, and generates ranked root cause hypotheses before a human finishes reading the page. The MTTR drop comes from minutes of investigation instead of hours of dashboard hunting.

How does AI SRE differ from traditional incident management tools?

Traditional incident management routes alerts and pages humans. AI SRE investigates the alert before the human responds: it queries telemetry, cross-references recent deploys, and proposes root cause hypotheses with supporting evidence. Think of it as the difference between an alert router and an investigator.

What makes a Production Context Graph different from observability data?

Observability gives you logs, metrics, and traces. A Production Context Graph connects that telemetry to your infrastructure, code, dependencies, and tribal knowledge, then retains decision traces from engineers who resolved past investigations. The next time a similar failure pattern appears, the system already knows which dashboards matter, who owns the service, and which deploy patterns cause trouble.